Your Sovereign Odoo Code Forge AI (v19)

Nubby CLI brings multi-model AI to your terminal. Brain for reasoning, Worker for code generation, Guard for validation — all orchestrated locally.

Caellum's Official Releases

The official release reel for Caellum, presented ahead of the core neural architecture showcase.

See It in Action

Caellum's neural architecture — from concept to code.

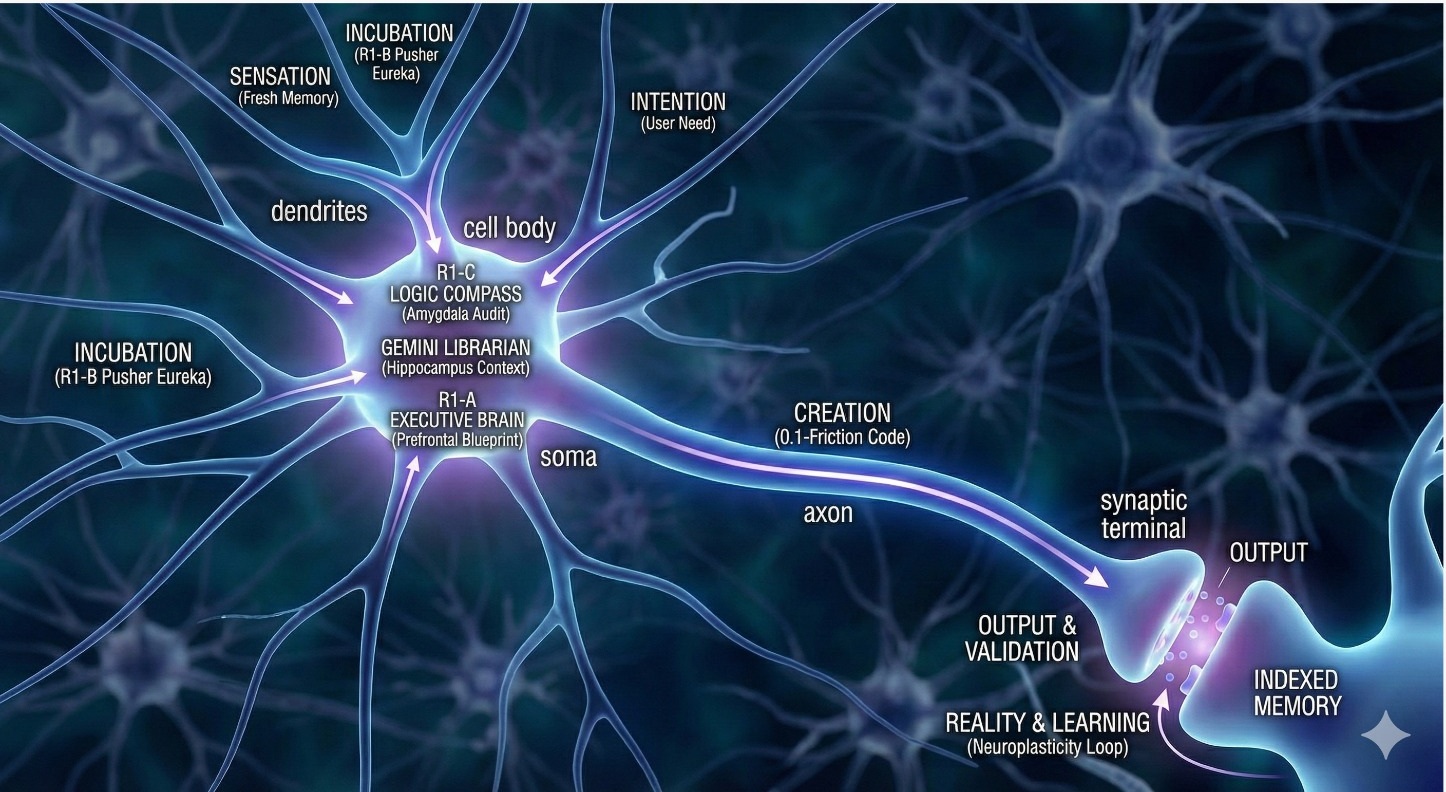

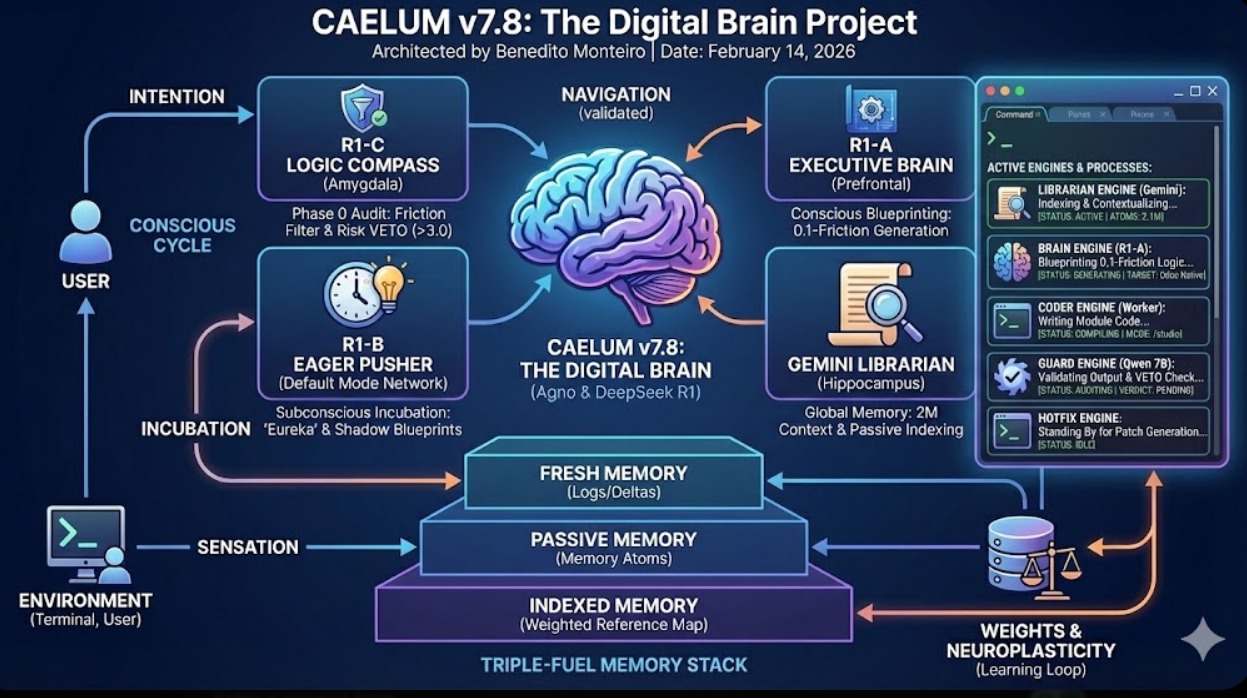

The Digital Brain

Watch how the Brain, Librarian, Worker, and Guard engines orchestrate reasoning in real time.

Neural Processing

From intention to validated output, signals flow through Caellum's synaptic architecture.

Eight Agents, One CLI

Soma — Chief Architect

Strategic planning and task decomposition. DeepSeek V3 reasons through complex module architecture before a single line is written.

Librarian — Universe Researcher

Knowledge retrieval with a 1M-token context window. Gemini 2.5 Flash reads entire specs, extracts patterns, and researches Odoo Apps and OCA modules.

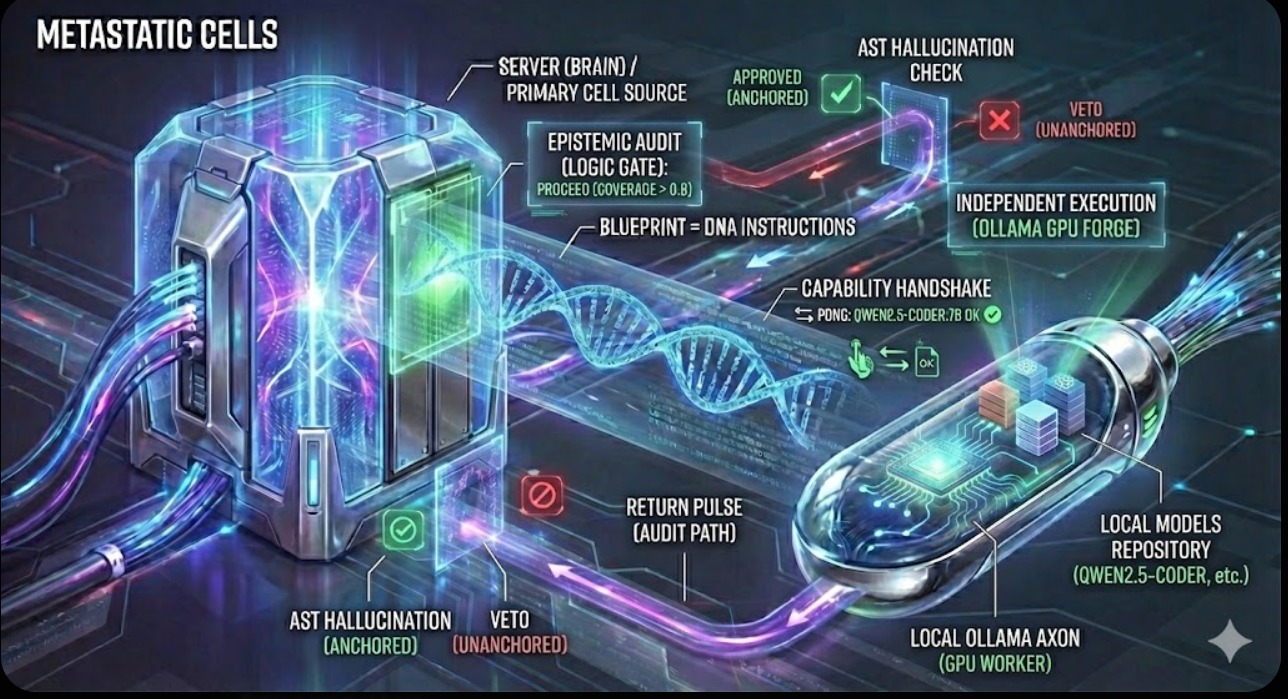

Worker — Builder

Local GPU code generation via Ollama. Qwen 2.5 Coder runs on your machine: no network latency, no cloud cost, full privacy.

Guard — Sentinel

Security validation at every step. Detects SQL injection, sudo() abuse, and missing ACLs. NVIDIA qwq-32b with automatic fallback.
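To make the kind of check described here concrete, the following is a minimal sketch of a static scan for two of the named patterns: `sudo()` calls and `execute()` calls whose query is built dynamically. It is not Guard's actual implementation, which is model-based. The function name `scan_source` and the messages are hypothetical, and the missing-ACL check is left out because it needs the module's `ir.model.access.csv`, not just Python source.

```python
import ast


def scan_source(source: str) -> list[tuple[int, str]]:
    """Return sorted (line, message) findings for two risky Odoo patterns.

    Hypothetical helper for illustration only.
    """
    findings = []
    for node in ast.walk(ast.parse(source)):
        # Only method-style calls such as obj.sudo() or cr.execute(...) matter here.
        if not isinstance(node, ast.Call) or not isinstance(node.func, ast.Attribute):
            continue
        if node.func.attr == "sudo":
            findings.append((node.lineno, "sudo() call: check that bypassing access rights is intended"))
        elif node.func.attr == "execute" and node.args:
            query = node.args[0]
            # f-strings, "%" or "+" expressions, and str.format() build SQL from data.
            dynamic = (
                isinstance(query, (ast.JoinedStr, ast.BinOp))
                or (
                    isinstance(query, ast.Call)
                    and isinstance(query.func, ast.Attribute)
                    and query.func.attr == "format"
                )
            )
            if dynamic:
                findings.append((node.lineno, "execute() with a dynamically built query: possible SQL injection"))
    return sorted(findings)
```

A query passed as a plain string with separate parameters, as in `cr.execute("... WHERE name = %s", (name,))`, is not flagged, since the database driver handles the escaping in that form.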

Vision — Visual Analyst

Screenshot-to-code analysis. Reads UI mockups and Figma designs, converts visual intent into OWL components and QWeb views.

Hippocampus — Memory

Long-term knowledge persistence. Manages development memories, session context, and module patterns across conversations.

Council — Deliberation

Architectural consensus for critical decisions. Three Workers generate solutions, and the Judge (qwen 3.5 397B) picks the best approach.
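The generate-then-judge flow can be sketched in a few lines. This is an illustration of the pattern only, under the assumption that each Worker maps a task to one candidate solution and the Judge returns the index of the candidate it prefers. The names `deliberate`, `Worker`, and `Judge` are hypothetical and do not come from the CLI.

```python
from typing import Callable, Sequence

# Hypothetical types: a Worker turns a task into a candidate solution,
# a Judge returns the index of the candidate it prefers.
Worker = Callable[[str], str]
Judge = Callable[[str, Sequence[str]], int]


def deliberate(task: str, workers: Sequence[Worker], judge: Judge) -> str:
    """Collect one candidate per worker and return the one the judge selects."""
    candidates = [worker(task) for worker in workers]
    choice = judge(task, candidates)
    if not 0 <= choice < len(candidates):
        raise ValueError(f"judge returned invalid index {choice}")
    return candidates[choice]
```

In a real setup each callable would wrap a model call. For a dry run, plain functions are enough, for example three lambdas as workers and a judge that picks the shortest candidate.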

Watchdog — Autonomic

Background health monitoring. Watches Ollama status, model availability, GPU memory, and auto-recovers from failures silently.

Simple, Transparent Pricing

All features included. Pick your scale.

Free

- 25K tokens / day

- ~500 lines of code / day *

- 1 CLI session

- 3 requests / minute

- All features included

Individual

- 1M tokens / day

- ~20,000 lines of code / day *

- Up to 3 CLI sessions

- 30 requests / minute

- All features included

- Priority support

Team

- 10 developer licenses

- Unlimited tokens

- Unlimited lines of code

- Up to 30 CLI sessions

- 60 requests / minute

- All features included

- Dedicated support

* Estimated lines of code based on typical Odoo module development tasks.
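Both quoted estimates work out to the same ratio of about 50 tokens per line of code. The short calculation below derives that ratio from the Free and Individual figures and applies it to an arbitrary budget. The function names are hypothetical, and the page does not say whether the token count covers prompts as well as generated code, so treat the result as a rough guide.

```python
def tokens_per_line(tokens_per_day: int, lines_per_day: int) -> float:
    """Ratio implied by a plan's token quota and its estimated lines of code."""
    return tokens_per_day / lines_per_day


FREE_RATIO = tokens_per_line(25_000, 500)               # 50.0
INDIVIDUAL_RATIO = tokens_per_line(1_000_000, 20_000)   # 50.0


def estimated_lines(token_budget: int, per_line: float = 50.0) -> int:
    """Rough lines-of-code estimate for a token budget at the implied ratio."""
    return int(token_budget // per_line)
```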

Join the Waitlist

Be among the first to access Caellum when we launch.